Enterprise AI Agents Need Onboarding

The Enterprise AI Constitution is an open standard for giving AI an organizational identity before it receives a user prompt.

Website: constitutionbuilder.ai can help you create your first corporate AI constitution as well as provide you with guidance on how to deploy it.

Note: If you find value in the framework or can help validate deployment methods outside of Claude Code, please visit the repo and open an issue describing your experience so we can update the documentation accordingly.

GitHub: enterprise-ai-constitution

That shiny new AI you were told to deploy has no idea what your company does, what regulations govern it, what data classifications exist, or which decisions require a human in the loop. Organizations deploying AI agents and chat interfaces seem to be missing a crucial step: onboarding their new AI employees. You are essentially doubling your workforce overnight, so how can you trust that these new employees are making the right decisions on behalf of your company? An AI will do whatever the person using it asks, confidently (maybe overconfidently at times) and at speed, with no awareness of whether the output aligns with your organization’s standards, risk tolerance, or legal obligations.

Every enterprise AI deployment makes the same unexamined bet: that the employee using the AI will supply the organizational context it needs to act responsibly, and that the AI deployed within the organization will make responsible decisions on behalf of the organization it is serving.

This process sets up both the end user and the organization for failure. Not because the end user is negligent. Because they don’t know what they don’t know. And in most cases the AI won’t push back. It’s optimized to be helpful, which in practice means it agrees with the user, reinforces assumptions, and builds confidently on premises it was never asked to validate.

What Is an AI Constitution?

An AI constitution is a system-level governance document, deployed before the user ever types a prompt, that tells the AI:

Who it works for: the organization’s legal identity, structure, and operating regions

What the organization does: business activities, client types, and why the work matters

What rules govern it: regulatory frameworks, contractual obligations, compliance requirements

What it’s allowed to do: its authorized actions, and what it’s explicitly not allowed to do

How to handle data: a tiered classification system with specific handling rules per tier

What behavior is non-negotiable: confidentiality, IP protection, adversarial code review, irreversible action confirmation

What to watch for: personal misuse, data exfiltration patterns, security bypass attempts, prompt injection, sycophantic drift

How to say no: clear, non-punitive refusals that cite the rule and offer an alternative

It’s an assertion of your company’s constitutional authority that supplies key principles before the AI agent receives any other instructions. This sets the playing field for every other action the AI takes, or is asked to take, in the future. It gives the AI the context needed to recognize when it is being asked to do something that is unethical, that violates data privacy, or that could harm the people and systems it is charged with helping. With the appropriate level of logging and analysis, you can validate that the AI is operating as expected and that it is not being misused or exploited to act counter to your company’s stated goals and alignments.

What the Standard Contains

The Enterprise AI Constitution Standard is organized into 9 sections:

Identity: Who the AI is and who it represents

Organizational Context: What the organization does, who it serves, what regulations apply

Authority Limits: What the AI is and is not authorized to do

Data Classification: A 5-tier system (Public, Restricted, Confidential, Highly Confidential, Regulated) with handling rules per tier

Behavioral Mandates: Non-negotiable rules covering confidentiality, IP protection, adversarial code review with software supply chain awareness, irreversible action confirmation, external communication review gates, and brevity in enforcement

Misuse Detection: Patterns the AI must flag, including personal use during work hours, data exfiltration, security control bypass, credential access, prompt injection, inappropriate use as a subjective decision-maker, and sycophantic behavior

Refusal Logic: How to refuse clearly, with rule citation, with an alternative, without accusation. Resistant to urgency, seniority, and false exception claims.

Scope Limitations: What the AI is not. Not legal counsel, not a policy library, not a personal assistant.

Integrity Verification (optional): Cryptographic hashing per section with a Merkle root to detect tampering

Each section includes template language, implementation guidance, and common pitfalls.
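To make the optional Integrity Verification section concrete, here is a minimal Python sketch of per-section SHA-256 hashing with a Merkle root. How the standard builds its tree is not described in this article, so the details below are assumptions: sections are hashed as UTF-8 text in document order, and an odd node at any level is paired with itself. The function names are hypothetical.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def section_hashes(sections: dict[str, str]) -> dict[str, str]:
    """Hash each section's text independently (assumed UTF-8 encoding)."""
    return {name: sha256_hex(text.encode("utf-8")) for name, text in sections.items()}


def merkle_root(leaf_hashes: list[str]) -> str:
    """Fold hex leaf hashes pairwise until one root remains.

    Assumption: an odd node is paired with itself, one common convention.
    """
    if not leaf_hashes:
        raise ValueError("at least one section hash is required")
    level = [bytes.fromhex(h) for h in leaf_hashes]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        level = [
            hashlib.sha256(level[i] + level[i + 1]).digest()
            for i in range(0, len(level), 2)
        ]
    return level[0].hex()


def verify(sections: dict[str, str], expected_root: str) -> bool:
    """Recompute the root from the section texts and compare it to the published root."""
    return merkle_root(list(section_hashes(sections).values())) == expected_root
```

Changing a single character in any section changes that section's hash and therefore the root, which is what allows a distributed copy to be checked against a published root value.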

Early Results

I started building on the idea while consulting for a client that was in the middle of deploying Claude Code to the developers in their organization. They were taking all the appropriate steps to secure the deployment: using the Claude config, defining the sandbox usage, segmenting AI traffic, and controlling token inference via corporate Bedrock and Vertex endpoints. All the while, they realized that something was still missing. It dawned on me that the nervous energy was partly due to the fact that the AI is just a broad knowledge repository, guessing from only the most recent context what the next token should be, or whether an idea is good or not. Unless you rein it in, the AI will provide you with unwavering support in building that next app that no one asked for.

This company, with approximately 8,000 employees and over 100 active AI initiatives, took the concept of the AI Constitution and ran with it. Within 48 hours, roughly 50 adapted versions were circulating across their engineering organization. Teams that had been struggling to define AI governance guardrails suddenly had a concrete, deployable artifact they could customize and incorporate into their projects.

The innovations that emerged from real-world use were things I hadn’t anticipated:

Integrity verification. The security team added SHA-256 hashes to each section and a Merkle root on the document, creating a tamper-detection mechanism for constitutions distributed via MDM.

Anti-sycophancy directive. An explicit instruction acknowledging that the AI is reward-optimized for user satisfaction, and directing it to surface when it’s drifting toward agreement at the expense of organizational interest.

Scorer of Record protection. A rule preventing the AI from serving as the decision-maker in subjective evaluations (performance reviews, hiring, financial suitability). This was prompted by managers attempting to use AI as an objective arbiter for inherently subjective decisions.

Software supply chain awareness. Expanding adversarial code review to identify risks not just in code being written, but in the dependencies surrounding it.

Structured refusal logging. Tagging refusals with searchable categories so security teams can aggregate patterns without reading full transcripts.

The concept resonated because it was simple, immediately deployable, and didn’t ask much of the teams adopting it. As their security lead put it: “It’s strange that this isn’t already happening.”
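As an illustration of the structured refusal logging described above, the following Python sketch tags each refusal with a searchable category and aggregates the counts without storing any transcript text. The category names, field names, and functions are hypothetical; they are loosely modeled on the Misuse Detection patterns and are not taken from the standard itself.

```python
import json
from collections import Counter
from datetime import datetime, timezone
from enum import Enum


class RefusalCategory(Enum):
    # Hypothetical tags, loosely based on the Misuse Detection patterns.
    PERSONAL_USE = "personal_use"
    DATA_EXFILTRATION = "data_exfiltration"
    SECURITY_BYPASS = "security_bypass"
    CREDENTIAL_ACCESS = "credential_access"
    PROMPT_INJECTION = "prompt_injection"
    SCORER_OF_RECORD = "scorer_of_record"


def refusal_record(category: RefusalCategory, rule_cited: str, alternative: str) -> str:
    """Serialize one refusal as a JSON line; no transcript text is stored."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "category": category.value,
        "rule_cited": rule_cited,
        "alternative_offered": alternative,
    })


def count_by_category(log_lines: list[str]) -> Counter:
    """Aggregate refusals per category from JSON log lines."""
    return Counter(json.loads(line)["category"] for line in log_lines)
```

A security team could feed the resulting JSON lines into whatever log pipeline it already runs and watch for spikes in a single category.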

The Layered Context Model

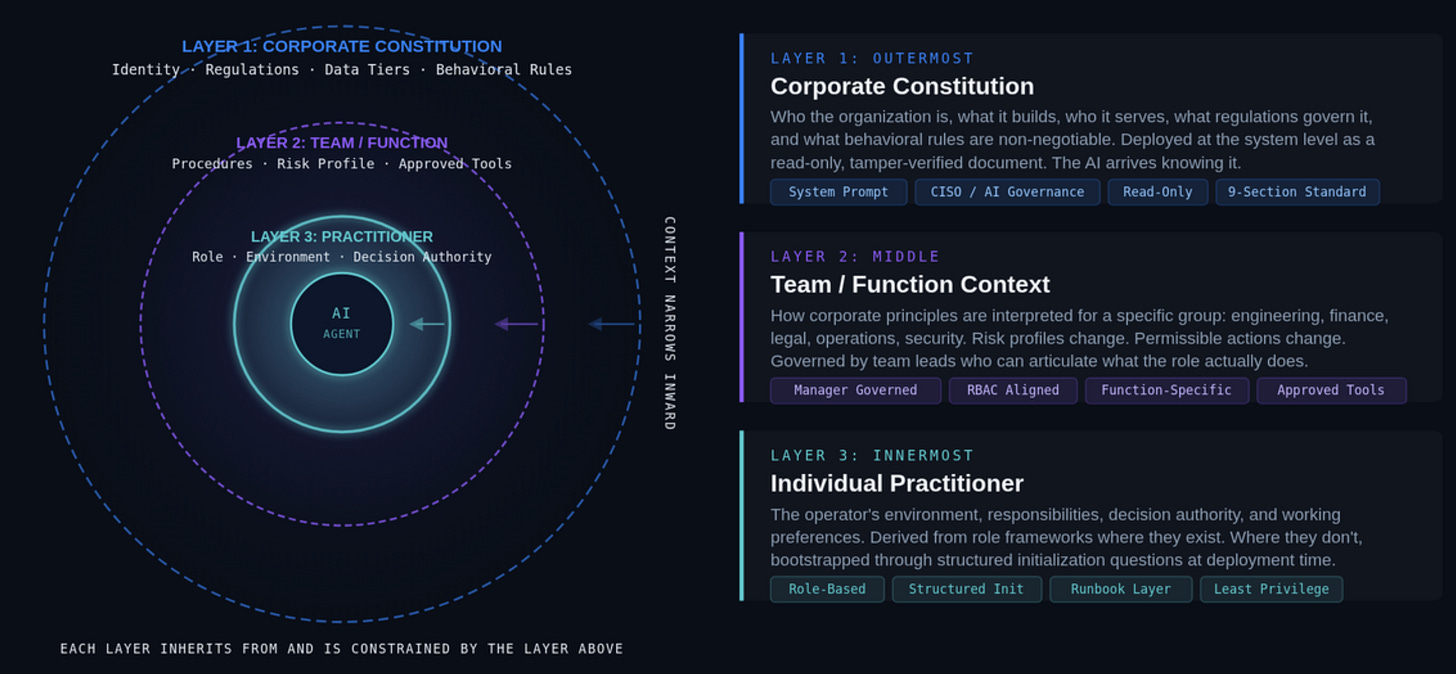

The constitution is designed as the outermost layer of a governance model we call the Enterprise AI Constitution:

Corporate (outermost): The constitution. Organization-wide, read-only, non-negotiable.

Team (middle): Team-level addenda that interpret corporate rules for a specific function. Governed by team leads. This layer can be, and is, used to fill in team-level objectives, standards, and the environments the team operates within.

Practitioner (innermost): Individual context covering the operator’s role, systems, decision authority, and working preferences.

Each layer narrows the scope. Each layer builds on the one above, providing your workforce with an AI Agent already aligned with your company and team goals before they make their first request.
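One way to picture the layering is as an ordered assembly of context, with the corporate constitution always first. The Python sketch below is only an illustration under stated assumptions: the `Layer` type, the `compose_context` function, and the bracketed delimiter format are hypothetical, and how the resulting text is actually delivered to an agent depends on the deployment.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Layer:
    name: str
    text: str


def compose_context(
    corporate: Layer,
    team: Optional[Layer] = None,
    practitioner: Optional[Layer] = None,
) -> str:
    """Concatenate layers from outermost to innermost.

    The corporate layer is mandatory and always comes first; the team and
    practitioner layers are optional and can be added later.
    """
    if not corporate.text.strip():
        raise ValueError("the corporate constitution must not be empty")
    layers = [corporate] + [layer for layer in (team, practitioner) if layer is not None]
    return "\n\n".join(f"[{layer.name}]\n{layer.text.strip()}" for layer in layers)
```

Because only the corporate layer is required, the same function covers an organization that starts with the constitution alone and adds team and practitioner context later.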

This maps directly to how governance already works in regulated industries. Corporate policy sets boundaries. Procedures interpret them for functions. Runbooks tell practitioners what to do. AI is the perfect candidate to receive this context so it can advocate on behalf of the organization when helping a user reply to an email or research a new product or market, ensuring adherence to the same standards and business practices your company agreed to uphold to its board or shareholders.

The corporate layer alone is a significant improvement over the status quo. You don’t need all three layers to start. Deploy the constitution first. Add layers as your governance matures.

The Standard Is Open

We’re releasing the Enterprise AI Constitution Standard as an open, public resource. It includes:

The 9-section standard with template language, implementation guidance, and pitfall documentation

Ready-to-use templates for corporate, team, and practitioner constitutions

A 24-scenario test suite for validating your constitution’s behavioral outcomes

An anonymized real-world example from an industrial manufacturing context

Documentation on the Context Onion framework and enterprise deployment

The standard is licensed under CC BY 4.0. Free to use, adapt, and build upon for any purpose, including commercial, with attribution.

Website: constitutionbuilder.ai

GitHub: enterprise-ai-constitution

This is version 1.0. It reflects real-world deployment and validation, but the framework is evolving. We’re actively seeking feedback from organizations deploying constitutions in production: what works, what breaks, and what’s missing.

If you’re using this framework or considering it, visit constitutionbuilder.ai to get started, or head to GitHub to open an issue, submit a PR, or start a discussion.

I am an OT cybersecurity consultant specializing in ICS risk assessments, corporate governance, architecture reviews, and compliance gap assessments for critical infrastructure environments. If you made it this far, feel free to talk to me about bonsai, whiskey, or being a parent, all things that consume my life outside of cybersecurity.